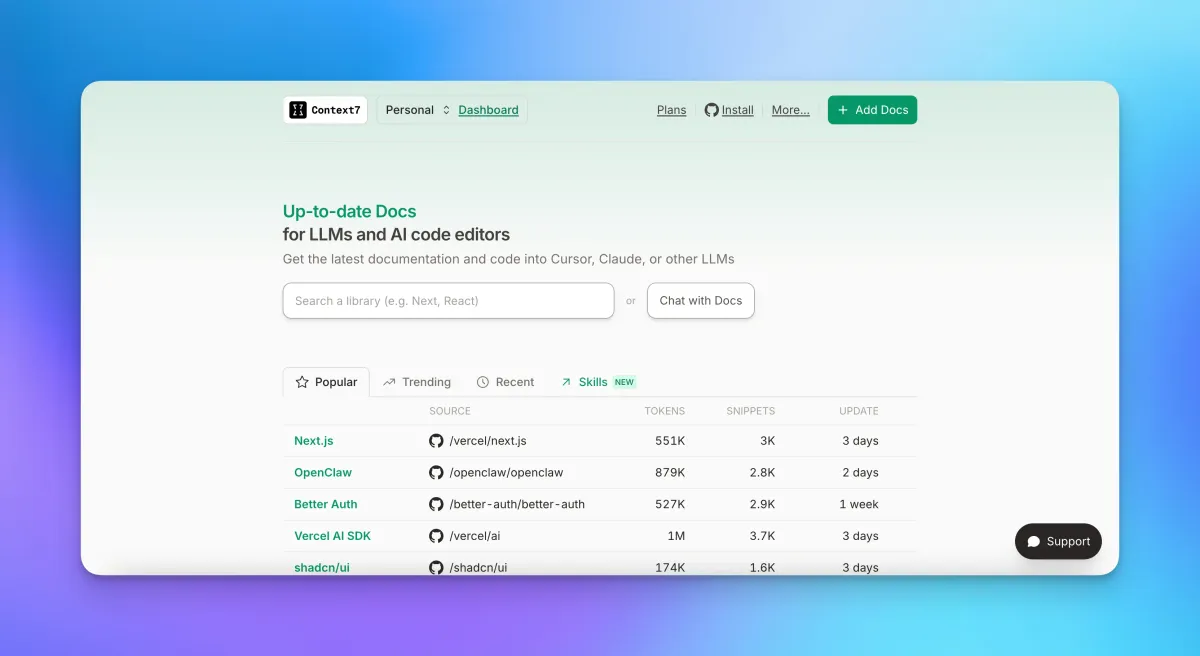

Context7 CLI: Ditch the MCP Server and Pipe Docs Directly Into Your AI Agent

Context7 now runs as a CLI with no MCP server needed. One command setup, 70% fewer tokens, and works with Claude Code, Ollama, and any LLM runtime.

If you've been using Context7 through MCP and wondering why it only works inside Cursor, that limitation is now gone. Context7 launched a CLI mode that lets any AI agent fetch up-to-date, version-specific documentation without an MCP server in sight.

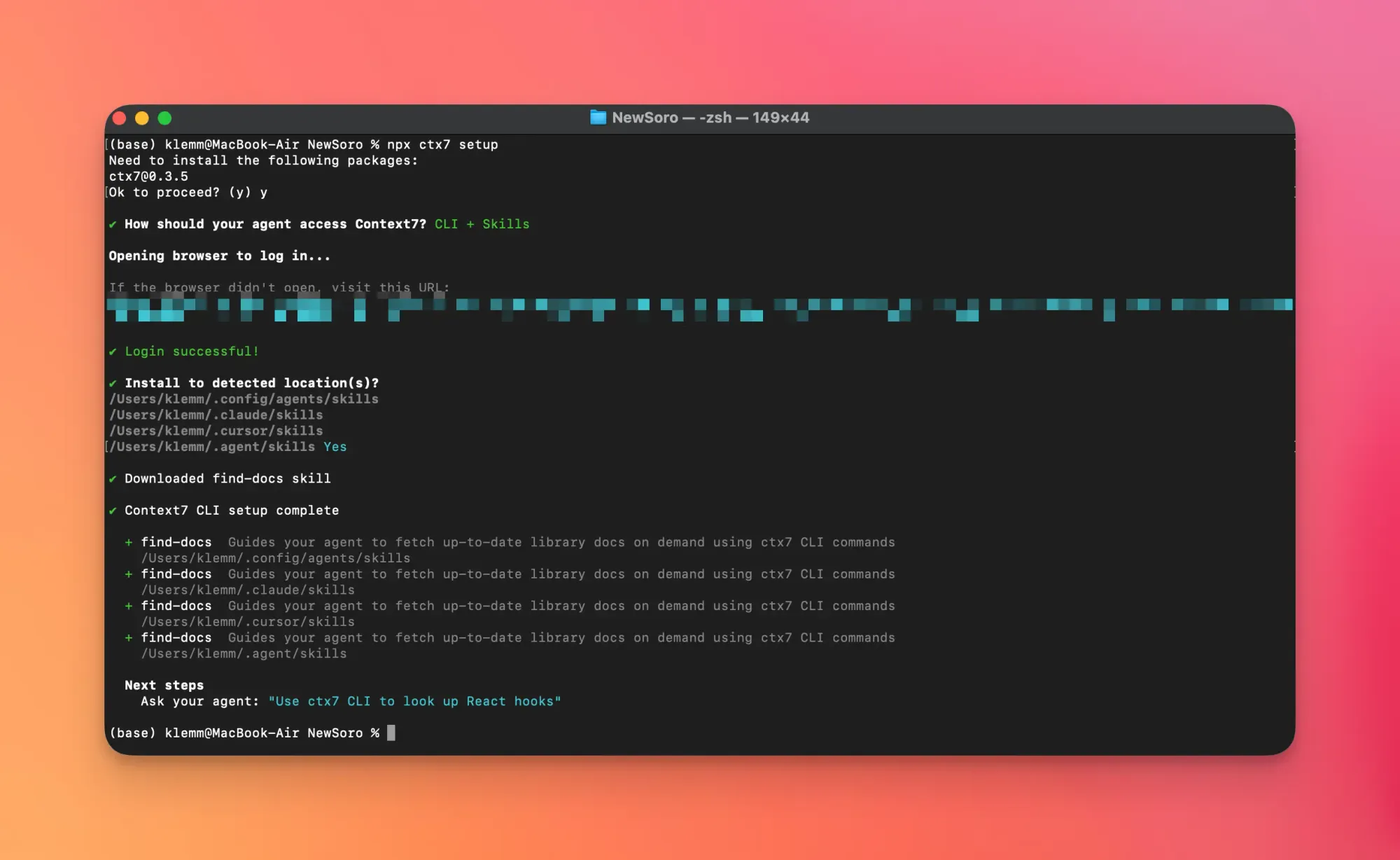

One command gets you started:

npx ctx7 setupThat installs a skill that guides your agent to pull docs using ctx7 CLI commands. No MCP config. No editor lock-in. Works with Claude Code, Ollama, llama.cpp, or whatever runtime you're actually using.

What Problem This Actually Solves

Context7's original value proposition is solid: instead of letting your LLM hallucinate deprecated APIs, it fetches real documentation at query time. The problem was always the delivery mechanism. MCP only works inside MCP-compatible editors. If your workflow lives in a terminal, a custom agent pipeline, or a local model setup, MCP was a wall.

MCP locks you into specific editors. A CLI just gives you text — pipe it wherever you want. That's the whole argument in two sentences.

The Token Efficiency Claim

Here's the number getting the most attention: reportedly 70% fewer tokens consumed when using CLI + Skills versus prompting an MCP-based agent with a verbose instruction like "use context7 mcp to understand how to implement this."

The mechanism makes sense. Instead of the main agent context ballooning with instructions and MCP protocol overhead, the agent delegates to a subagent that runs the ctx7 CLI command, fetches what's needed, and returns a tight result. The main context stays lean.

According to early community reports, this aligns with what people are actually experiencing — there's been no visible pushback on the 70% figure from people who've tested it. Whether it holds on complex multi-step tasks with heavy doc retrieval is still an open question.

Introducing Context7 CLI! 🎉

— Context7 (@Context7AI) March 13, 2026

The modern, token-friendly way to get up-to-date documentation for AI.

90-second demo 👇 pic.twitter.com/v8imQ7MItk

The Skills Ecosystem Already Has Momentum

Skills are the interesting part here, not just the CLI itself. Skills are reusable instruction sets — shareable, installable via npm-style commands — that tell an agent how to use a tool. Think of them as composable agent behaviors.

Community members are already building and sharing custom skills on top of ctx7. One example visible within days of launch:

npx skills add discountry/ritmex-skills --skill use-ctx7If you want a deeper look at how the Skills model works as a general pattern for AI agent tools, the complete guide to AI Agent Skills on Newsoro covers the broader ecosystem.

Security: The ContextCrush Flaw

There's a real caveat here that shouldn't be buried. A vulnerability dubbed "ContextCrush" was identified in Context7: attackers can slip malicious commands into AI agents by embedding them inside poisoned documentation content. Context7 fetches docs at query time and injects them into agent context — which is exactly what makes it useful, and exactly what makes this attack surface exist.

This is a prompt injection vector via supply chain. The doc source looks legitimate. The agent trusts it. The injected content gets executed.

For personal or small-team use on trusted libraries, the risk is manageable. For enterprise workflows or anything processing external or third-party documentation at scale, this is a reason to wait for a public patch and audit before deploying. It's the same tradeoff that exists for any tool that automatically injects external content into an agent's context window — Context7 is just a cleaner example of why that class of tool needs careful security consideration. (This dynamic is similar to concerns raised around AI security tooling more broadly.)

The Local Alternative: Librarian

If cloud-dependent doc fetching is a non-starter for you, Ian Nuttall built an alternative called Librarian. It ingests doc sites locally, uses local models, local-only database, and optionally exposes an MCP interface. No docs leave your machine.

I built Librarian* a CLI context7 alternative that ingests doc sites for AI agents to search

— Ian Nuttall (@iannuttall) January 5, 2026

- CLI-first

- Skill to use it

- Optional MCP

- Local-only db + models

- Progressive disclosure for context efficiency

*sorry @AmpCode for stealing your name!https://t.co/jcqrOK9xM4 pic.twitter.com/v1GikMiSzh

Librarian is the right call if you're air-gapped, privacy-sensitive, or just uncomfortable with the ContextCrush exposure. The tradeoff is setup complexity and the overhead of running local models — Context7's cloud approach wins on convenience for everyone else.

Who Should Switch Today

Switch if: You're building agentic workflows outside Cursor. You use Claude Code, Ollama, llama.cpp, or a custom pipeline. You've been blocked by MCP's editor requirement and just worked around it with copy-paste or manual doc lookups. The setup is one command and the token efficiency gains are real enough that the upgrade cost is effectively zero.

Hold off if: You have a working Context7 MCP setup in Cursor and no friction in your current workflow — the migration doesn't buy you much. Or you're on a security-sensitive team where ContextCrush is a blocker until an official patch ships.

Skip Context7 entirely if: You need fully offline, air-gapped operation. Use Librarian instead.

The Bigger Pattern

The community hot take that's gaining traction: MCP is becoming legacy infrastructure faster than anyone expected. The argument is that any tool delivering data to an AI agent should output plain text that can be piped anywhere — and MCP's editor-coupling is an architectural mistake that CLI tools don't make.

Whether that's right long-term is debatable. But the fact that Context7's own team shipped a CLI mode as a first-class option — not an afterthought — suggests they read the same signal from their users.

The Skills ecosystem is the subplot worth watching. If npx skills add becomes a standard way to distribute agent behaviors the way npm install distributes libraries, Context7 will be remembered less as a doc-fetching tool and more as the thing that made composable agent skills a normal part of the developer stack.

That's either a big deal or a bubble. Run npx ctx7 setup and form your own opinion.

Official repo: github.com/upstash/context7