DeepSeek V4: What We Know, What's Rumored, and Why It's Still Not Here

DeepSeek V4 was expected in February 2026 but still hasn't launched. Here's everything we know about its specs, delays, and why the AI world is watching closely.

- DeepSeek V4 was widely expected to launch in mid-February 2026, but as of early March 2026, it still hasn't arrived.

- Rumors point to a massive model: reportedly 1 trillion parameters, a 1 million token context window, and native multimodal capabilities.

- Multiple leaks suggest it outperforms current versions of ChatGPT and Claude on coding tasks, though none of this is officially confirmed.

- DeepSeek and Qwen together have grown from roughly 1% to 15% of global AI market share in a single year, which one analysis describes as the fastest adoption curve on record.

- The AI community is actively watching for a release, with several major model drops from Google, OpenAI, Anthropic, and xAI also expected around the same window.

The Model That Keeps Not Arriving

For the past few months, DeepSeek V4 has been the most anticipated AI release that never quite shows up. The original target was mid-February 2026. Then the Lunar New Year window came and went on February 17. Then late February passed. And as of early March 2026, there's still no official launch.

That hasn't stopped the speculation from running hot. Across AI research circles, tech investors, and developer communities, DeepSeek V4 has become one of those rare releases that generates its own gravitational pull — drawing attention just by being imminent. The company's last major release, DeepSeek R1, rattled markets and erased hundreds of billions in market cap from AI infrastructure stocks in a single day. People are paying attention this time.

So what do we actually know? A mix of confirmed details, credible rumors, and a fair amount of hype. Here's how to separate them.

What the Rumors Say About the Model Itself

The most detailed picture of DeepSeek V4 comes from a combination of early reporting and third-party analysis sites that have been tracking leaks. According to those sources, V4 is shaping up to be one of the most ambitious AI models built to date — on paper, at least.

1 Trillion Parameters

Parameters are the "weights," or learned values, inside a neural network; more of them generally means more capacity to handle complex tasks. According to early reporting, DeepSeek V4 is built around 1 trillion parameters, which would put it firmly in the frontier tier alongside the largest models from OpenAI and Google.
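To make that scale concrete, here is a rough back-of-the-envelope sketch of how much storage the weights alone would need at a few common numeric precisions. It assumes the rumored 1 trillion figure is accurate and counts only the weights, not activations or any runtime overhead. The function names are illustrative, and nothing here reflects how DeepSeek actually stores or serves the model.

```python
# Rumored parameter count (unconfirmed; taken from the reporting above).
RUMORED_PARAMS = 1_000_000_000_000

# Bytes needed per parameter for some common numeric formats.
BYTES_PER_PARAM = {
    "fp32": 4.0,   # 32-bit floating point
    "bf16": 2.0,   # 16-bit floating point
    "fp8": 1.0,    # 8-bit floating point
    "int4": 0.5,   # 4-bit quantization
}


def weight_storage_tb(num_params, bytes_per_param):
    """Return the storage needed for the weights alone, in terabytes (1 TB = 1e12 bytes)."""
    return num_params * bytes_per_param / 1e12


def storage_table(num_params=RUMORED_PARAMS):
    """Map each precision name to the weight storage it would require, in TB."""
    return {
        name: weight_storage_tb(num_params, size)
        for name, size in BYTES_PER_PARAM.items()
    }


if __name__ == "__main__":
    for name, terabytes in storage_table().items():
        print(f"{name}: {terabytes:.1f} TB")
```

Even at 4-bit precision, a model of this rumored size would need roughly half a terabyte for its weights, which is worth keeping in mind when reading claims about running it locally.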

A 1 Million Token Context Window

The context window is how much text a model can read and reason over at once. One million tokens is an enormous amount — roughly 750,000 words, or the equivalent of several full-length novels. If accurate, this would make V4 capable of processing entire codebases, long legal documents, or extended research papers in a single pass.
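The word-count estimate above can be reproduced with a short sketch. It assumes the common rule of thumb of about 0.75 English words per token, which varies by tokenizer and language, and a hypothetical 90,000-word novel for comparison. Neither figure comes from DeepSeek.

```python
# Rule-of-thumb conversion for English text (an assumption; real ratios
# depend on the tokenizer and the language).
WORDS_PER_TOKEN = 0.75

# Hypothetical length of a "full-length novel," used only for comparison.
WORDS_PER_NOVEL = 90_000


def tokens_to_words(tokens, words_per_token=WORDS_PER_TOKEN):
    """Estimate how many English words fit in a given number of tokens."""
    return int(tokens * words_per_token)


def novels_in_context(tokens, words_per_novel=WORDS_PER_NOVEL):
    """Estimate how many novels of the assumed length fit in the context window."""
    return tokens_to_words(tokens) / words_per_novel


if __name__ == "__main__":
    context_tokens = 1_000_000  # rumored V4 context window (unconfirmed)
    print(f"Approximate words: {tokens_to_words(context_tokens):,}")
    print(f"Approximate novels: {novels_in_context(context_tokens):.1f}")
```

Under these assumptions, a 1 million token window holds about 750,000 words, or a little over eight novels of that length.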

Native Multimodal Capabilities

Multimodal means the model can handle more than just text — images, potentially audio, and other input types. DeepSeek V4 is reportedly being built with multimodal support from the ground up, rather than bolted on after the fact.

Open-Source and Cheaper to Run

One of the defining features of DeepSeek's previous models has been their open-weight licensing — meaning developers can download and run them locally rather than paying per API call. According to reporting, V4 is expected to follow the same model. Some sources describe it as "10X efficient" compared to competing closed models, though that specific claim comes from social media speculation rather than verified benchmarks.

DeepSeek's broader trajectory supports the efficiency angle. The company has consistently built models that punch above their weight in terms of cost-to-performance ratio, which is a large part of why their market share has grown so quickly.

The Coding Angle: Why Developers Are Especially Interested

DeepSeek's earlier models, particularly the R1 series, earned a strong reputation among software developers for code generation and reasoning. V4 is reportedly designed to push that further. According to multiple sources, the model has been specifically built around memory architecture improvements and sparse attention mechanisms — technical approaches that tend to benefit complex, multi-step tasks like writing and debugging code.

One researcher noted on X that Anthropic recently restricted Claude's access in several third-party coding tools, which could push developers toward alternatives. The same post flagged DeepSeek V4 as one of the most likely beneficiaries.

Anthropic blocked Claude subs in third-party apps like OpenCode, and reportedly cut off xAI and OpenAI access.

Claude and Claude Code are great, but not 10x better yet. This will only push other labs to move faster on their coding models/agents.

DeepSeek V4 is rumored to drop…

— Yuchen Jin (@Yuchenj_UW) January 9, 2026

The Market Reaction Everyone Is Anticipating

Part of what makes the V4 release unusually charged is what happened the last time DeepSeek dropped a major model. When DeepSeek R1 launched, it triggered a sharp sell-off in AI infrastructure stocks, particularly Nvidia, because it suggested that frontier-level AI could be built far more cheaply than the market had assumed. The logic: if you don't need as many chips to train a world-class model, the demand outlook for expensive hardware looks shakier.

Some voices on social media have leaned hard into this narrative ahead of V4's release, pointing to broader macro uncertainty — rising oil prices, geopolitical tension — as a backdrop that could amplify any market reaction.

🚨 CHINA IS ABOUT TO DROP THE BIGGEST BOMB NO ONE IS READY FOR

DeepSeek V4 launches THIS WEEK.

Multimodal. Open source. 10X efficient. Built to EXCLUDE NVIDIA.

Rumored to beat Claude Opus 4.6.

$650B in AI capex is sitting on the table for 2026.

If DeepSeek proves frontier AI…

— 🇨🇳 Wei Zhao 赵伟 (@antmillionsbot) March 3, 2026

It's worth applying some skepticism here. That kind of framing ("no one is ready," "built to exclude NVIDIA") is common in financial social media and tends to be more dramatic than the way events actually play out. What is more grounded is that DeepSeek's efficiency story is real, and if V4 delivers on its rumored specs, it will likely renew the same questions about AI infrastructure spending that R1 raised in early 2025.

A Crowded Release Window

DeepSeek V4 isn't landing in a vacuum. The coming weeks are shaping up to be one of the most competitive release periods in AI history, with major models expected from Google DeepMind, OpenAI, Anthropic, and xAI all reportedly arriving around the same time. That makes it harder for any single release to dominate the conversation — but it also raises the stakes for each lab to ship something genuinely impressive.

The Broader Context: DeepSeek's Rise Is Already Remarkable

Even before V4 ships, DeepSeek's growth over the past year tells an interesting story. According to one analysis, DeepSeek and Qwen (Alibaba's open-weight model family) went from a combined 1% of global AI market share in January 2025 to roughly 15% by January 2026. The same analysis calls that the fastest adoption curve on record for AI models, and it happened largely on the back of open-weight releases that developers could actually run and build on top of.
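For a sense of what that jump means numerically, the following sketch works through the arithmetic on the two reported figures. The monthly rate assumes smooth compounding over twelve months, which is a simplification for illustration only; the analysis cited above reports just the start and end points.

```python
def growth_summary(start_share, end_share, months=12):
    """Summarize growth between two market-share values given as fractions.

    Returns (fold increase, percentage-point gain, implied monthly growth rate).
    The monthly rate assumes steady compounding, which is an illustrative
    simplification rather than a reported figure.
    """
    fold = end_share / start_share
    points = (end_share - start_share) * 100
    monthly_rate = fold ** (1 / months) - 1
    return fold, points, monthly_rate


if __name__ == "__main__":
    # Reported combined share: about 1% in January 2025, about 15% in January 2026.
    fold, points, monthly_rate = growth_summary(0.01, 0.15)
    print(f"Fold increase: {fold:.0f}x")
    print(f"Percentage-point gain: {points:.0f}")
    print(f"Implied monthly growth: {monthly_rate:.1%}")
```

Going from 1% to 15% is a fifteenfold increase, or a gain of 14 percentage points, which under the steady-compounding assumption works out to roughly 25% growth per month.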

V4, if it launches as described, would be the most capable open-weight model DeepSeek has released. According to that same reporting, it would not just be catching up to proprietary models from OpenAI and Anthropic but competing at the same level, or above them, on certain tasks.

Meanwhile, the broader AI community keeps producing interesting work while waiting for V4. Indian AI lab Sarvam recently released two open-weight reasoning models — Sarvam 30B and 105B — that drew comparisons to models from OpenAI and Qwen. The 105B variant even adopted DeepSeek's own attention architecture, a sign of how influential DeepSeek's technical approaches have become.

While waiting for DeepSeek V4 we got two very strong open-weight LLMs from India yesterday.

There are two size flavors, Sarvam 30B and Sarvam 105B model (both reasoning models).

Interestingly, the smaller 30B model uses “classic” Grouped Query Attention (GQA), whereas the…

— Sebastian Raschka (@rasbt) March 7, 2026

Why Is It Late?

There's no official explanation from DeepSeek for the delay. The company never publicly committed to a release date in the first place. The February window came from industry reporting, leaks, and inference from past release patterns (DeepSeek has a history of shipping around Chinese holidays). The most likely explanations are standard: final safety evaluations, infrastructure preparation, or last-minute performance tuning. None of that is unusual for a model at this scale.

What is a little unusual is the volume of anticipation. V4 has been talked about as a major release for months, which means the longer it takes, the more pressure there is to deliver something that lives up to the buildup.

Frequently Asked Questions

When is DeepSeek V4 actually launching?

As of early March 2026, there is no confirmed release date. The mid-February 2026 target has passed without a launch. DeepSeek has not officially announced a date.

What makes DeepSeek V4 different from V3?

Based on available reporting, V4 is expected to be significantly larger (reportedly 1 trillion parameters), support a much longer context window (1 million tokens), and include native multimodal capabilities — meaning it can handle images and other inputs, not just text.

Will DeepSeek V4 be open source?

Based on DeepSeek's track record and early reporting, V4 is expected to be released as an open-weight model, meaning developers can download and run it locally. Nothing has been officially confirmed.

Could DeepSeek V4 affect Nvidia's stock again?

It's possible. The DeepSeek R1 launch triggered a significant sell-off in AI hardware stocks because it suggested that frontier AI could be built more cheaply than the market expected. If V4 reinforces that trend, similar reactions are plausible, though market conditions and timing will play a large role.

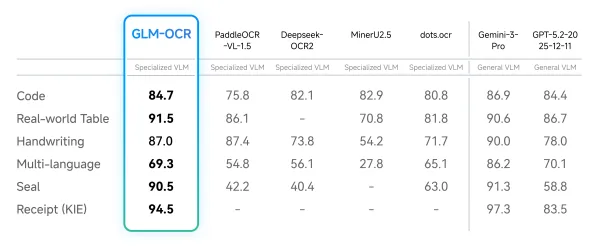

How does DeepSeek V4 compare to ChatGPT and Claude?

According to leaks and early reports, V4 is rumored to outperform current versions of ChatGPT and Claude, particularly on coding tasks. However, no official benchmarks have been published, and these claims should be treated as unverified until the model actually launches.

Bottom Line

DeepSeek V4 is real, it's coming, and based on the available evidence it could be one of the more significant open-source AI releases in recent memory. The combination of scale (1 trillion parameters), efficiency, multimodal support, and open-weight licensing — if it all holds up — would give developers a genuinely powerful alternative to closed models from OpenAI and Anthropic. DeepSeek has earned enough credibility with its previous releases that this isn't just hype.

That said, the model hasn't launched yet, and the gap between rumored specs and actual performance is often wider than pre-release coverage suggests. The best approach right now is to stay curious, stay skeptical, and wait for the actual benchmarks. When V4 does ship, the results will speak for themselves.