GLM-5-Turbo: Z.ai's Closed-Source Agent Model Built Exclusively for OpenClaw

GLM-5-Turbo is faster and cheaper than prior GLM models — but it's closed-source, OpenClaw-only, and its ecosystem partner's founder just joined OpenAI.

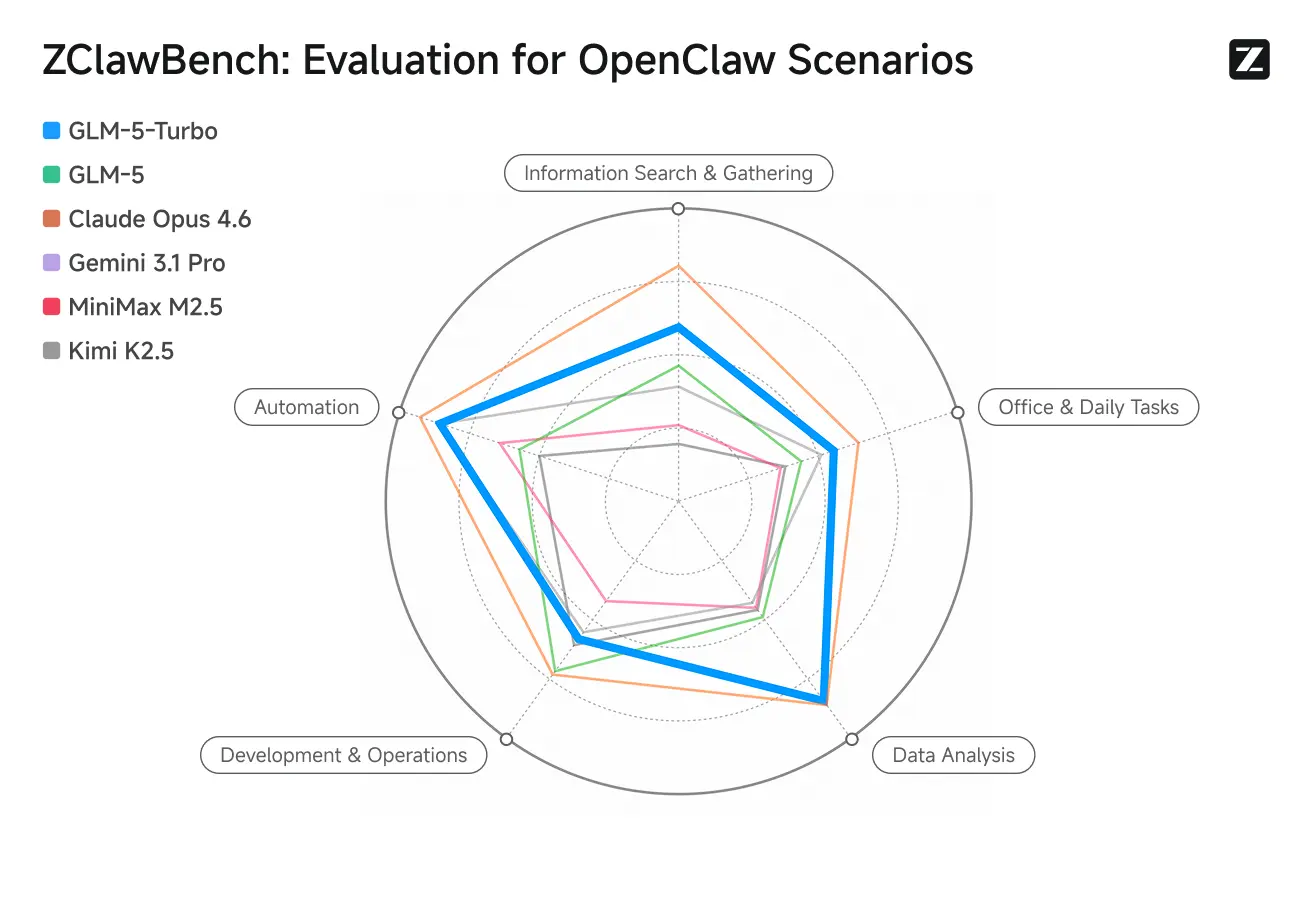

GLM-5-Turbo scores 77.8 on SWE-bench Verified, putting it just behind Claude Opus and ahead of Gemini 2.5 Pro, but it ships with no public weights and runs best inside exactly one agentic framework. That combination alone tells you this is not a standard model release.

GLM-5-Turbo is a new model from Z.ai (also known as Zhipu AI), released on March 15, 2026. It is explicitly an experimental variant of GLM-5, designed and optimized from the ground up for the OpenClaw agentic framework and "claw"-style task workflows. It is not a general-purpose API endpoint. If you are not running OpenClaw, most of what makes this model interesting will not apply to you.

That's a narrow target. Here's what happened when it hit it.

What Was Claimed vs. What Was Tested

The stated thesis: GLM-5-Turbo is faster and cheaper than prior GLM models, with latency reductions specifically requested by developers building production agents. The benchmark claim is 77.8 on SWE-bench Verified — one of the more reliable proxies for real software engineering capability, not a curated toy task.

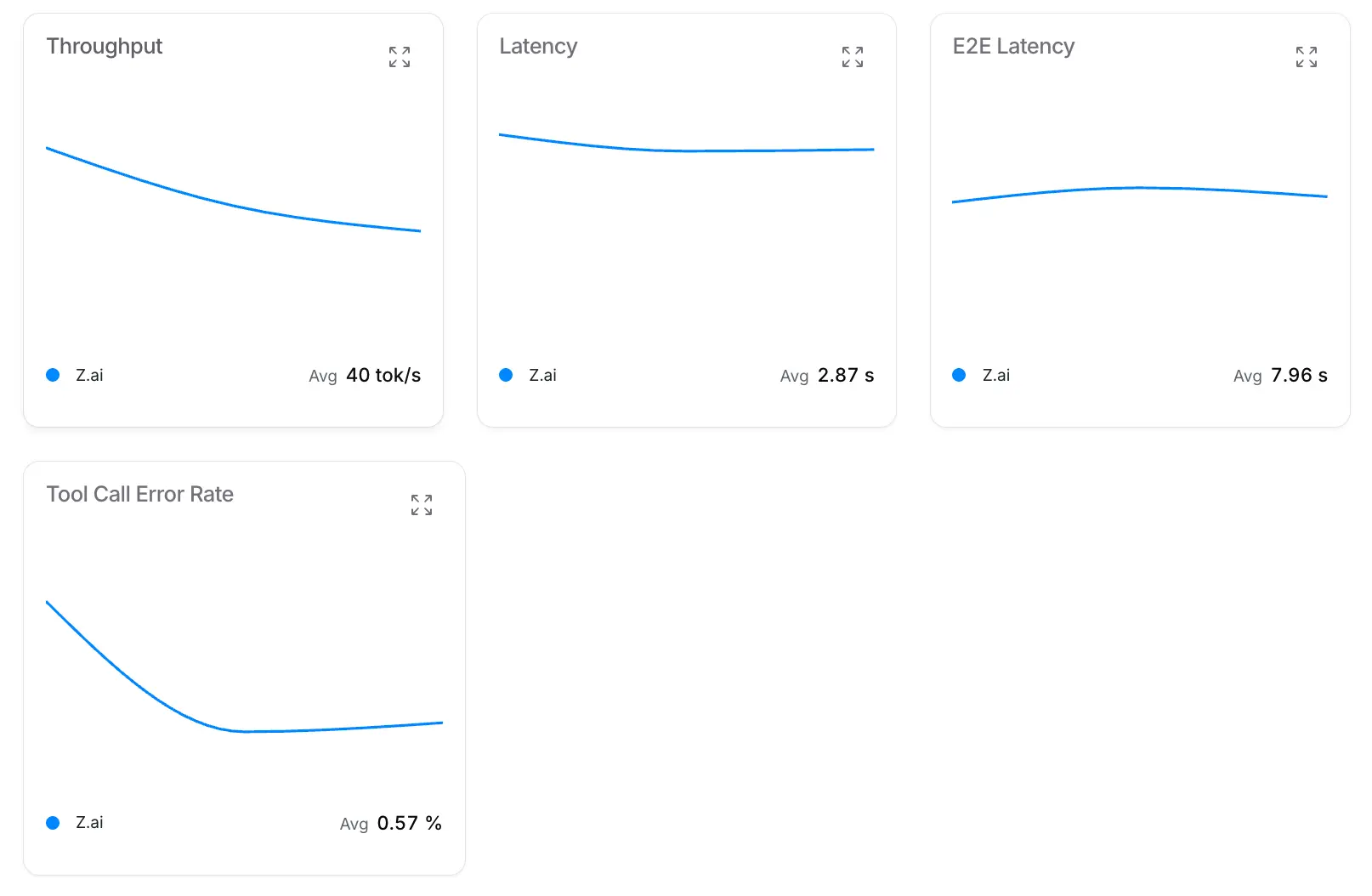

In practice, testers running it inside OpenClaw reported prompt-to-output times short enough that a website redesign task completed within 60 seconds of submission. That's not latency marketing — that's a measurable timestamp difference between prompt input and deployed output. The model runs at approximately 52 tokens per second, with a 200k context window. For agent loops that use memory compaction (which OpenClaw does), the context ceiling is less of a bottleneck than it looks on paper.
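As a rough sanity check on those two numbers, the sketch below works out how much output fits in a 60-second window at 52 tokens per second. It assumes a constant decode rate and ignores time-to-first-token, tool calls, and deployment time, none of which the reported figures break out. The function names are illustrative.

```python
def max_output_tokens(tokens_per_second: float, seconds: float) -> int:
    """Upper bound on generated tokens, assuming a constant decode rate."""
    return int(tokens_per_second * seconds)


def generation_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Time to stream a given number of tokens at a constant decode rate."""
    return output_tokens / tokens_per_second


if __name__ == "__main__":
    print(max_output_tokens(52, 60))               # 3120
    print(round(generation_seconds(2000, 52), 1))  # 38.5
```

Under these assumptions, a task that finishes within 60 seconds can have produced at most about 3,120 output tokens, which is a useful bound when judging how large a redesign fits in that window.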

One measurable quirk: GLM-5-Turbo consumes tokens faster than older GLM models. If you're on Z.ai's subscription plans, your token budget depletes more quickly. The model also has a toggleable thinking mode — for maximum speed, turn it off; for complex multi-step tasks, leave it on. That's a reasonable design choice for an agent-first model, but it's something to configure deliberately rather than assume.
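One way to configure this deliberately is to set the mode on every request instead of relying on a default. The sketch below only builds a request body and sends nothing. The model identifier `glm-5-turbo` and the shape of the `thinking` field are assumptions modeled on the chat-completions format Z.ai has documented for earlier GLM models, so check the current API reference before relying on either.

```python
import json


def build_request(prompt: str, multi_step: bool) -> dict:
    """Build a chat request body with thinking mode chosen explicitly.

    Assumptions (not confirmed by the article): the model id and the
    shape of the `thinking` field.
    """
    return {
        "model": "glm-5-turbo",  # hypothetical model id
        "messages": [{"role": "user", "content": prompt}],
        # Thinking on for complex multi-step tasks, off for maximum speed.
        "thinking": {"type": "enabled" if multi_step else "disabled"},
    }


if __name__ == "__main__":
    body = build_request("Rename the config module", multi_step=False)
    print(json.dumps(body, indent=2))
```

Passing the flag as a required argument forces each call site to decide, which also makes the faster token consumption easier to attribute when reviewing usage.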

The model is currently available on Z.ai's Max plan and via OpenRouter. Pro plan access is expected by the end of March 2026.

Introducing GLM-5-Turbo: A high-speed variant of GLM-5, excellent in agent-driven environments such as OpenClaw.

— Z.ai (@Zai_org) March 15, 2026

Coding Plan Max: https://t.co/E63z53nXOX

OpenRouter: https://t.co/ldgN3uiI2M

API: https://t.co/13bVk0Vpup

The Closed-Source Decision

This is the friction point. The GLM family built its developer reputation on openness — the base GLM-5 model is fully open-source, with 744 billion total parameters (roughly 40 billion active at inference time via mixture-of-experts), a 200k context window, downloadable weights on HuggingFace, and benchmark results that lead all open-source models globally on reasoning, coding, and agentic tasks.

GLM-5-Turbo ships with none of that. No weights. No fine-tuning access. No on-premise deployment option. Closed API only.

Z.ai's official position, posted directly:

Note: As an experimental version, GLM-5-Turbo is currently closed-source. All capabilities and findings will be incorporated into our next open-source model release.

— Z.ai (@Zai_org) March 15, 2026

The commitment to fold findings into a future open-source release is a reasonable explanation. It does not fully resolve the tension. Promising to open-source something "later" is structurally different from open-sourcing it now, and developers who built trust on the GLM family's openness are right to notice the difference.

The OpenClaw Dependency and the Founder Risk

Building your flagship turbo model exclusively around one agentic framework is a significant bet. It pays off if OpenClaw becomes the dominant agent execution layer for enterprise workflows. It becomes a liability if the framework fragments, stalls, or loses developer mindshare.

There's a specific wrinkle here worth flagging: OpenClaw's creator, Peter Steinberger, has joined OpenAI. That's the kind of fact that doesn't change today's benchmarks but does affect how you think about ecosystem durability. Z.ai is tying its turbo model to a framework whose founder now works at a direct competitor. Zhipu's stock surged nearly 9% on the GLM-5-Turbo announcement — the market read it as validation of the OpenClaw strategy. That confidence may be warranted. The founder risk is real either way.

Speed as a Real Differentiator, Not Marketing

Developer reaction on X is broadly positive, with a specific quality worth noting: people are treating the latency improvements as technically substantive, not as branding.

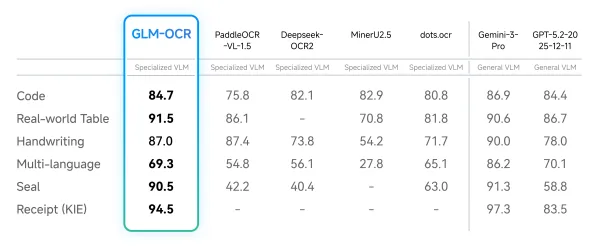

If the 52 tokens/second figure holds under production load — and that's an important conditional — this sits meaningfully above what most frontier closed-model API endpoints deliver for agent tasks. The comparison point most developers will reach for is Claude or GPT endpoints via OpenRouter. On raw throughput and cost, GLM-5-Turbo appears to have an edge. On multimodal capability, it doesn't compete: this is currently a text-only model. That's a hard ceiling for workflows involving images, documents, or screenshots — use cases where something like GLM-OCR would handle the structured extraction layer before passing to a text model.
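Checking that conditional yourself comes down to timing a streamed response under your own load. The sketch below computes decode throughput from timestamps recorded as chunks arrive. `StreamSample` and the sample values are illustrative, not measurements of GLM-5-Turbo.

```python
from dataclasses import dataclass


@dataclass
class StreamSample:
    """One observation taken while a response streams in."""
    elapsed_seconds: float  # time since the request was sent
    tokens_received: int    # cumulative output tokens so far


def decode_tokens_per_second(samples: list[StreamSample]) -> float:
    """Throughput between the first and last sample.

    Starting from the first sample excludes time-to-first-token, so the
    result reflects decode speed rather than queueing or prefill delay.
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    first, last = samples[0], samples[-1]
    duration = last.elapsed_seconds - first.elapsed_seconds
    if duration <= 0:
        raise ValueError("samples must span a positive duration")
    return (last.tokens_received - first.tokens_received) / duration


if __name__ == "__main__":
    # Made-up sample values for illustration only.
    samples = [StreamSample(1.0, 1), StreamSample(6.0, 261), StreamSample(11.0, 521)]
    print(decode_tokens_per_second(samples))  # 52.0
```

Running the same measurement across concurrent requests and at different times of day is what separates a headline figure from a production one.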

GLM-5 (Base) vs. GLM-5-Turbo: The Actual Distinction

These are different products solving different problems. GLM-5 (the open-source base) achieves best-in-class performance among open-source models globally across reasoning, coding, and agentic tasks — representing a significant jump over GLM-4.7 driven by improvements to both pre-training and post-training. You can download it, fine-tune it, run it on-premise.

GLM-5-Turbo offers none of that. It is a closed, API-only, agent-optimized variant with faster inference, lower cost per call, and no weights. The GitHub repo for GLM-5 describes the base model's trajectory clearly; GLM-5-Turbo is a separate commercial product layered on top of that research foundation.

If your workflow requires open weights — for fine-tuning, auditing, or deployment behind your own firewall — GLM-5-Turbo does not serve that need. The base GLM-5 does. This distinction is not always clear in the coverage, and conflating them produces the wrong conclusions.

The Sunday Drop

The release timing, a Sunday, drew specific attention from observers. It is unlikely to be accidental. Weekend AI releases hit news cycles when competitor announcements are quiet, and they generate concentrated social discussion that carries through Monday. This is an increasingly deliberate release strategy in the AI space, and it worked: the story dominated weekend AI coverage and contributed to Zhipu's ~9% stock surge when markets opened.

Whether that's a sign of tactical savvy or a signal that Z.ai is optimizing for news velocity over developer readiness is a fair question. The model was available on OpenRouter at launch, so at minimum the infrastructure was ready.

My Take

GLM-5-Turbo is a real product with real performance numbers, not a benchmark press release. For OpenClaw users running long, complex automations via API, the speed and cost improvements are worth testing against your current setup. The SWE-bench score is credible. The latency claims appear to hold in early testing.

The closed-source decision is a calculated risk on Z.ai's part, and the OpenClaw founder situation adds a variable that didn't exist a few months ago. The roughly 9% stock pop reflects market confidence in the strategy. Developer confidence will depend on whether the promised open-source follow-up actually ships, and when.

The thesis embedded in this release — that the next wave of AI value runs through agent workflows, not chatbots — is a reasonable bet. Z.ai is placing it specifically on OpenClaw-style task execution. If that bet is right, being early and optimized for it matters. If the OpenClaw ecosystem fragments, the "Turbo" label won't save the positioning.