OpenAI to Acquire Promptfoo: What It Means for AI Security

OpenAI is buying AI security startup Promptfoo to embed red-teaming and security testing into its Frontier platform. Here's what it means and what comes next.

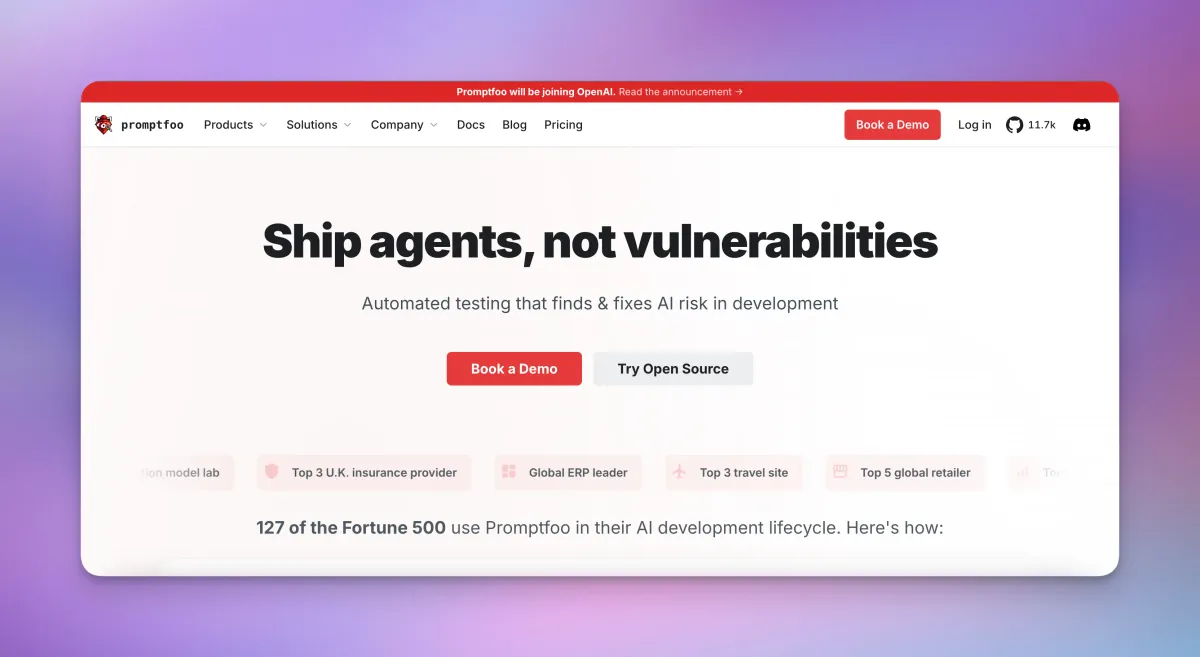

- OpenAI is acquiring Promptfoo, an AI security startup that helps enterprises find and fix vulnerabilities in AI systems before deployment.

- Promptfoo's tools are already used by more than 25% of Fortune 500 companies and over 125,000 developers.

- The technology will be integrated directly into OpenAI Frontier, OpenAI's platform for building and running AI agents in enterprise workflows.

- The open-source version of Promptfoo will continue to exist after the deal closes.

- Financial terms were not disclosed.

OpenAI Is Getting Serious About Security

On March 9, 2026, OpenAI announced it has agreed to acquire Promptfoo, a cybersecurity startup focused on testing and securing AI systems. The deal signals that as OpenAI pushes deeper into enterprise territory — selling AI agents that work autonomously inside real business workflows — it knows security can no longer be an afterthought.

Promptfoo was founded in 2024 by Ian Webster and Michael D'Angelo. Despite being a young company, it built a strong reputation quickly. Its tools help developers and security teams run "red-teaming" exercises on AI systems — that's the practice of deliberately attacking your own system to find weaknesses before bad actors do. By the time of the acquisition, Promptfoo was trusted by over 127 Fortune 500 companies spanning healthcare, retail, telecoms, and enterprise software.

Once the deal closes, Promptfoo's capabilities will be folded directly into OpenAI Frontier, the company's platform designed for businesses building AI coworkers — autonomous agents that can take actions, access data, and operate inside real enterprise systems.

OpenAI has agreed to acquire cybersecurity startup Promptfoo

— Evan (@StockMKTNewz) March 9, 2026

Financial Terms of the deal were not disclosed - CNBC pic.twitter.com/lzsLaFuWQF

What Does Promptfoo Actually Do?

To understand why OpenAI wanted Promptfoo, it helps to understand what AI security testing actually involves. When a company deploys an AI agent — say, a customer service bot or an internal legal assistant — that agent can be manipulated. Attackers can craft inputs designed to trick the AI into ignoring its rules, leaking private data, or taking actions it shouldn't. These are called prompt injections and jailbreaks.

Promptfoo automates the process of finding these weaknesses. It simulates real users and adversarial attackers, generating thousands of context-aware attacks tailored to a specific AI application. It tests for things like:

- Direct and indirect prompt injections

- Jailbreaks designed to bypass guardrails

- Data and personally identifiable information (PII) leaks

- Insecure tool use in AI agents

- Business rule violations

- Toxic content generation

What made Promptfoo stand out wasn't just the breadth of tests — it was how it fit into developer workflows. The platform integrates with CI/CD pipelines (the automated systems developers use to test and ship code), GitHub, GitLab, and agent frameworks. Security findings show up directly in pull requests, so developers can fix issues without switching tools. It essentially brings security into the moment code is written, rather than treating it as a separate audit step at the end.

Why OpenAI Needed This

OpenAI's Frontier platform is built around AI agents — systems that don't just answer questions but take real actions. They can browse the web, write code, send emails, query databases, and interact with other software. That level of capability is genuinely useful, but it also creates a much larger attack surface than a simple chatbot.

As OpenAI's CTO of B2B Applications, Srinivas Narayanan, put it in the official announcement: "Promptfoo brings deep engineering expertise in evaluating, securing, and testing AI systems at enterprise scale. Their work helps businesses deploy secure and reliable AI applications, and we're excited to bring these capabilities directly into Frontier."

For enterprises, deploying AI agents at scale requires more than just capable models. It requires systematic ways to test agent behavior, detect risks before anything goes live, and maintain records for governance and compliance purposes. Regulators and risk teams need to know: what did this agent do, why did it do it, and how was it tested? Promptfoo's reporting and traceability tools help answer those questions.

What Gets Built Into Frontier

According to OpenAI's announcement, three capabilities will be developed as part of integrating Promptfoo into Frontier:

Security Testing Built Into the Platform

Automated red-teaming will become a native feature of Frontier, not a separate tool you have to bolt on. Enterprises will be able to scan for prompt injections, jailbreaks, data leaks, tool misuse, and out-of-policy agent behaviors as a standard part of building on the platform.

Security Integrated Into Development Workflows

Rather than a one-time audit, Frontier will embed security checks throughout the development process. The goal is to catch and fix risks earlier — during development, not after deployment.

Oversight and Accountability

Integrated reporting and traceability tools will help organizations document their testing, track changes over time, and meet growing regulatory and compliance expectations around AI governance.

The Open-Source Project Continues

One notable detail from the announcement: the Promptfoo open-source project will continue. For the 125,000-plus developers who use Promptfoo's free, open-source CLI (command-line interface) to test their own AI applications, the plan is for that work to carry on even as the team joins OpenAI.

As Promptfoo's own blog post noted, "The open-source project will continue as Ian Webster and Michael D'Angelo begin a new chapter." This matters because the open-source community has been a significant part of Promptfoo's strength — its real-time threat intelligence draws from a user base of over 300,000 people, contributing data on new attack vectors as they emerge.

A Broader Pattern: OpenAI Builds Out Its Enterprise Stack

This acquisition fits a clear pattern in OpenAI's recent moves. The company has been building out the infrastructure layer that large enterprises need to deploy AI responsibly — not just offering models, but offering the full stack around them. Earlier in 2026, OpenAI announced a strategic partnership with Amazon and an agreement with the Department of War, both pointing toward large-scale institutional deployment.

Buying Promptfoo is a direct acknowledgment that security is now a core part of that enterprise offering, not an optional add-on. As Forbes noted in its coverage, the acquisition signals that "agent safety is now table stakes" for any serious enterprise AI platform.

FAQ

What is Promptfoo?

Promptfoo is an AI security startup founded in 2024 that provides tools for testing and red-teaming AI systems. It helps developers and security teams find vulnerabilities — like prompt injections and jailbreaks — in AI applications before they go live. It is used by over 127 Fortune 500 companies and has a widely used open-source tool for developers.

How much did OpenAI pay for Promptfoo?

Financial terms of the deal were not disclosed by either party.

What is red-teaming in AI?

Red-teaming is a security practice where you deliberately try to attack or break your own system to find weaknesses. In the context of AI, it means generating adversarial inputs — crafted prompts designed to trick the AI into doing something it shouldn't — to expose vulnerabilities before real attackers find them.

Will Promptfoo's open-source tools still be available?

Yes. According to both OpenAI's announcement and Promptfoo's own blog post, the open-source project will continue after the acquisition closes.

What is OpenAI Frontier?

OpenAI Frontier is OpenAI's enterprise platform for building and operating AI agents — autonomous AI systems that can take real actions within business workflows. Promptfoo's security testing tools will be integrated directly into this platform once the deal closes.

Bottom Line

OpenAI acquiring Promptfoo is a practical move that reflects where the AI industry is heading. As AI agents become more capable and more deeply embedded in enterprise systems, the security risks grow with them. Buying a company that 127 Fortune 500 firms already trust to find those risks is a faster path to solving that problem than building it from scratch.

For enterprises already using or evaluating OpenAI's Frontier platform, this means security testing could soon be built into the tools they already use — rather than requiring a separate vendor relationship. For developers using Promptfoo's open-source tools, the immediate impact appears limited, as that project is set to continue. The real test will be how deeply and how quickly OpenAI can integrate these capabilities into Frontier, and whether that integration lives up to the promise of making AI agents genuinely safer to deploy at scale.