OpenAI GPT-5.4: Everything You Need to Know About the New Model

OpenAI released GPT-5.4 on March 5, 2026. Here's what's new (a 1M-token context window, native computer use, two variants) and what developers actually think.

- OpenAI released GPT-5.4 on March 5, 2026, in two variants: GPT-5.4 Thinking and GPT-5.4 Pro.

- The model supports a 1 million token context window — meaning it can process far more text in a single session than previous versions.

- GPT-5.4 introduces native computer use, allowing it to operate across apps and devices autonomously.

- OpenAI reported a 33% reduction in factual errors compared to GPT-5.2, according to Wikipedia's technical summary.

- Neither variant is available to free-tier users, and requests with more than 272,000 input tokens are billed at higher rates.

What Is GPT-5.4?

OpenAI launched GPT-5.4 on March 5, 2026, describing it as its "most capable and efficient frontier model for professional work." The release combines advances in reasoning, coding, and what OpenAI calls agentic workflows — the ability for an AI to take a series of actions toward a goal without needing a human to guide every step.

Unlike some recent OpenAI releases that felt like narrow, incremental tweaks, GPT-5.4 appears to be more of a consolidation. According to the DEV Community breakdown, it is the first mainline OpenAI reasoning model to bring together frontier professional-work quality, the coding strengths of GPT-5.3-Codex, native computer use, and a roughly one million-token context window (the breakdown gives the figure as 1.05 million) in one package.

The release came with two versions: GPT-5.4 Thinking, which is what most ChatGPT users will encounter, and GPT-5.4 Pro, a more powerful tier. Neither is available on OpenAI's free plan.

The Two Variants: Thinking and Pro

The naming here gets a little confusing, so it's worth slowing down. OpenAI released GPT-5.4 Thinking as the primary version inside ChatGPT. There is no GPT-5.4 Instant and, notably, no GPT-5.4 Codex variant. GPT-5.4 Pro launched the same day as a higher-end option for more demanding workloads.

This matters because it represents a shift from OpenAI's recent pattern. Previously, the company released separate "Codex" model variants — versions that had been fine-tuned for long-running code tasks in tools like the Codex CLI. With GPT-5.4, those capabilities appear to have been folded into the base model itself. The Codex name, by this reading, now refers to the product surface — the CLI, desktop app, and web interface — rather than a distinct model underneath.

What's Actually New

A Much Larger Context Window

One of the headline changes is the size of the context window, meaning how much text the model can read and work with at once. GPT-5.4 supports up to one million tokens. To put that in perspective, a token is roughly three-quarters of a word, so one million tokens comes to about 750,000 words: entire codebases, lengthy legal documents, or extended research files can now be fed to the model in a single session.
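The three-quarters-of-a-word rule of thumb can be turned into a quick back-of-the-envelope check. The sketch below is illustrative only: the function names are made up for this example, and the 0.75 ratio is an approximation that varies with language and content, so a real tokenizer would give different counts.

```python
import math

# Rule of thumb from the article: one token is roughly three-quarters of a word.
# Real ratios vary with language and content, so treat results as rough estimates.
WORDS_PER_TOKEN = 0.75
CONTEXT_WINDOW_TOKENS = 1_000_000  # GPT-5.4 context size as reported in the article


def tokens_to_words(tokens: int) -> int:
    """Approximate word count for a given number of tokens."""
    return round(tokens * WORDS_PER_TOKEN)


def words_to_tokens(words: int) -> int:
    """Approximate token count for a given number of words, rounded up."""
    return math.ceil(words / WORDS_PER_TOKEN)


def fits_in_context(words: int, window: int = CONTEXT_WINDOW_TOKENS) -> bool:
    """Return True if a document of `words` words likely fits in the window."""
    return words_to_tokens(words) <= window


if __name__ == "__main__":
    print(tokens_to_words(CONTEXT_WINDOW_TOKENS))  # 750000
    print(fits_in_context(90_000))                 # True (about 120,000 tokens)
```

By this estimate a 90,000-word manuscript would use about 120,000 tokens, well inside the window, while an 800,000-word archive would not fit.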

There is a pricing caveat. Once a request's input exceeds 272,000 tokens, input is billed at 2x the standard rate and output at 1.5x. Cached input tokens (text the model has already processed and stored) remain cheap, and OpenAI does not charge extra for caching, a point developers have noted favorably compared to some competitors.
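To see how the long-context surcharge affects a bill, here is a rough cost estimator. It rests on several assumptions not confirmed by the article: that the multipliers apply to the whole request once input crosses 272,000 tokens (rather than only to the tokens past the threshold), that cached-token discounts can be ignored, and that rates are quoted per million tokens. The rates in the usage example are placeholders, not OpenAI's published prices.

```python
LONG_CONTEXT_THRESHOLD = 272_000  # input-token threshold reported in the article
INPUT_MULTIPLIER = 2.0            # 2x input rate above the threshold
OUTPUT_MULTIPLIER = 1.5           # 1.5x output rate above the threshold


def estimate_cost(
    input_tokens: int,
    output_tokens: int,
    input_rate_per_million: float,
    output_rate_per_million: float,
) -> float:
    """Estimate the cost of one request in the same currency as the rates.

    Assumption: once input exceeds the threshold, the multipliers apply
    to every input and output token in the request. Caching is ignored.
    """
    long_context = input_tokens > LONG_CONTEXT_THRESHOLD
    input_mult = INPUT_MULTIPLIER if long_context else 1.0
    output_mult = OUTPUT_MULTIPLIER if long_context else 1.0

    input_cost = input_tokens / 1_000_000 * input_rate_per_million * input_mult
    output_cost = output_tokens / 1_000_000 * output_rate_per_million * output_mult
    return input_cost + output_cost


if __name__ == "__main__":
    # Placeholder rates: 1.00 per million input tokens, 10.00 per million output.
    print(estimate_cost(200_000, 10_000, 1.0, 10.0))  # below the threshold
    print(estimate_cost(400_000, 10_000, 1.0, 10.0))  # above the threshold
```

With these placeholder rates, doubling the input from 200,000 to 400,000 tokens roughly triples the estimated cost (about 0.30 versus 0.95), because the surcharge applies on top of the larger token count.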

Native Computer Use

GPT-5.4 can now interact directly with apps and operating system interfaces, not just generate text responses. According to The Verge, this native computer use capability is a significant step toward autonomous AI agents — systems that can perform multi-step jobs across your device without manual handholding at each stage. OpenAI also added a new search mechanism that lets the model automatically find the right tools an application needs for a given task, according to SiliconAngle.

Better Steering Mid-Task

One behavior change that developers will notice immediately: GPT-5.4 handles interruptions and new instructions much more gracefully. With earlier models, inserting a new message while the model was mid-task — say, adding a sixth item to a list it was already working through — would often cause it to drop everything and focus only on the new input, forgetting the original five tasks. According to early hands-on testing, GPT-5.4 handles this significantly better, incorporating the new instruction without abandoning prior work.

The Thinking variant also shows more of its reasoning process up front, and according to Ars Technica, users can prompt it to change course in the middle of that reasoning — a level of real-time control that wasn't available in the same way before.

More Efficient Reasoning Tokens

Reasoning models "think" before they respond, and that thinking costs tokens — which means it costs money and takes time. GPT-5.4 is reportedly much more token-efficient in how it reasons. According to early benchmark testing on a tool called Skatebench, the model used around 500 tokens on medium-difficulty tasks and about 1,100 on high-difficulty tasks. On extreme difficulty it still uses more — around 5,400 tokens per response — but efficiency improvements on the mid-range tasks are notable.
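For a sense of scale, the reported figures can be compared against each other. The snippet below only restates the approximate token counts quoted above and expresses each as a multiple of the medium-difficulty figure; the dictionary and function names are made up for this illustration.

```python
# Approximate reasoning-token counts per response, as reported in the article.
REPORTED_TOKENS = {"medium": 500, "high": 1_100, "extreme": 5_400}


def relative_to(baseline: str, counts: dict[str, int]) -> dict[str, float]:
    """Express each token count as a multiple of the baseline level."""
    base = counts[baseline]
    return {level: round(n / base, 2) for level, n in counts.items()}


if __name__ == "__main__":
    print(relative_to("medium", REPORTED_TOKENS))
    # {'medium': 1.0, 'high': 2.2, 'extreme': 10.8}
```

In other words, the extreme-difficulty responses used nearly eleven times as many tokens as the medium ones, which is why the mid-range savings matter most for everyday workloads.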

Vision Capabilities

The model's ability to understand images, diagrams, and dense visual documents appears to be substantially improved. Early users have been particularly vocal about this.

OpenAI solved vision with GPT-5.4, not sure what kind of magic they used but this is actually insane.

Miles ahead any model I tried for reading complex, information heavy documents.

Not even close

— Raphaël Dabadie🇫🇷 (@RaphaelDabadie) March 8, 2026

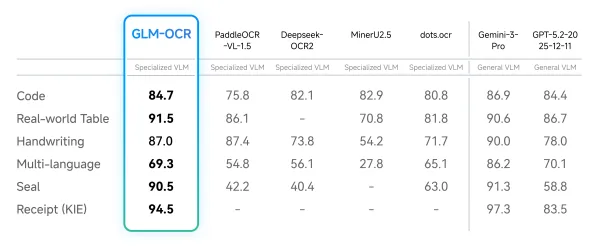

Benchmark Performance

On benchmarks, OpenAI reported that GPT-5.4 hits 83% on a pro-level knowledge benchmark, according to Interesting Engineering. Wikipedia's technical summary also notes a 33% reduction in factual errors compared to GPT-5.2. OpenAI's own blog describes it as their most capable and efficient frontier model, with state-of-the-art performance in coding, computer use, and tool search.

What It's Still Not Great At

No model is perfect, and GPT-5.4 has some clear limitations that early users have already flagged. Design work — particularly generating well-structured PowerPoint presentations — appears to be a weak spot. One developer found that giving GPT-5.4 better tooling instructions (prompted via Claude) dramatically improved slide output, suggesting the issue may be workflow and toolchain rather than raw capability.

GPT-5.4 is fantastic at many things, but not design. This includes PowerPoint design in ChatGPT. Like, really bad. And yet...

Turns out it's not a model issue. I had Claude Opus 4.6 give it instructions to improve its slide design, and it made something elegant.

I asked it to… pic.twitter.com/P3foBuLbx0

— Simon Smith (@_simonsmith) March 8, 2026

Creative writing is another area drawing criticism. Some writers report that content guardrails — the model's built-in filters that restrict certain types of content — have become stricter with GPT-5.4, making it harder to write fiction that involves tension, conflict, or morally complex characters.

GPT 5.4 guardrails are even worse than 5.2. OpenAI is straight up killing creative writing.

OpenAI took their strongest model and made the nannybot restrictions stricter than ever.

Real human emotion, tension, and natural storytelling now trigger instant blocks.

The best AI… pic.twitter.com/2BjfDYI6cq

— Kenshi (@kenshii_ai) March 9, 2026

Pricing and Access

GPT-5.4 is not available on OpenAI's free tier. Both the Thinking and Pro variants require a paid subscription. For API users, the extended context pricing kicks in above 272,000 input tokens: 2x input cost and 1.5x output cost. Cached input tokens remain cheap and are not subject to additional caching fees.

OpenAI offered some developers with early access a complimentary year of their Pro subscription — worth around $2,400 — though at least one developer with early access publicly declined it to avoid any appearance of bias.

Frequently Asked Questions

When was GPT-5.4 released?

OpenAI released GPT-5.4 on March 5, 2026.

What is the difference between GPT-5.4 Thinking and GPT-5.4 Pro?

GPT-5.4 Thinking is the standard version available inside ChatGPT that shows its reasoning process. GPT-5.4 Pro is a higher-tier version designed for more demanding workloads. Both launched on the same day. Neither is available to free-tier users.

What happened to GPT-5.3 Thinking?

There was no GPT-5.3 Thinking variant. OpenAI released GPT-5.3-Codex first, followed by GPT-5.3 Instant, and then jumped to GPT-5.4 Thinking. The reasoning model numbering skipped 5.3 Thinking entirely.

What is the context window for GPT-5.4?

GPT-5.4 supports up to one million tokens of context via the API. Once a request's input exceeds 272,000 tokens, higher rates apply: 2x for input tokens and 1.5x for output tokens.

Is GPT-5.4 available for free?

No. According to Wikipedia's summary of the release, neither GPT-5.4 Thinking nor GPT-5.4 Pro is available to free-tier users.

Bottom Line

GPT-5.4 feels like a deliberate consolidation rather than a narrow update. OpenAI has pulled together its best recent work in coding, reasoning, and computer use into a single model, expanded the context window significantly, and improved how the model handles being redirected mid-task. For developers and professional users who can afford the subscription, it looks like a meaningful step forward — particularly for vision, coding, and complex document work.

The gaps are real, though. Creative writers are frustrated with tighter content restrictions, and design output remains unreliable without careful prompting. Whether OpenAI addresses these issues in a future point release remains to be seen. For now, GPT-5.4 sets a new baseline for what to expect from the GPT-5 line.