OpenRouter Hunter Alpha and Healer Alpha: Testing Two Mystery Stealth Models

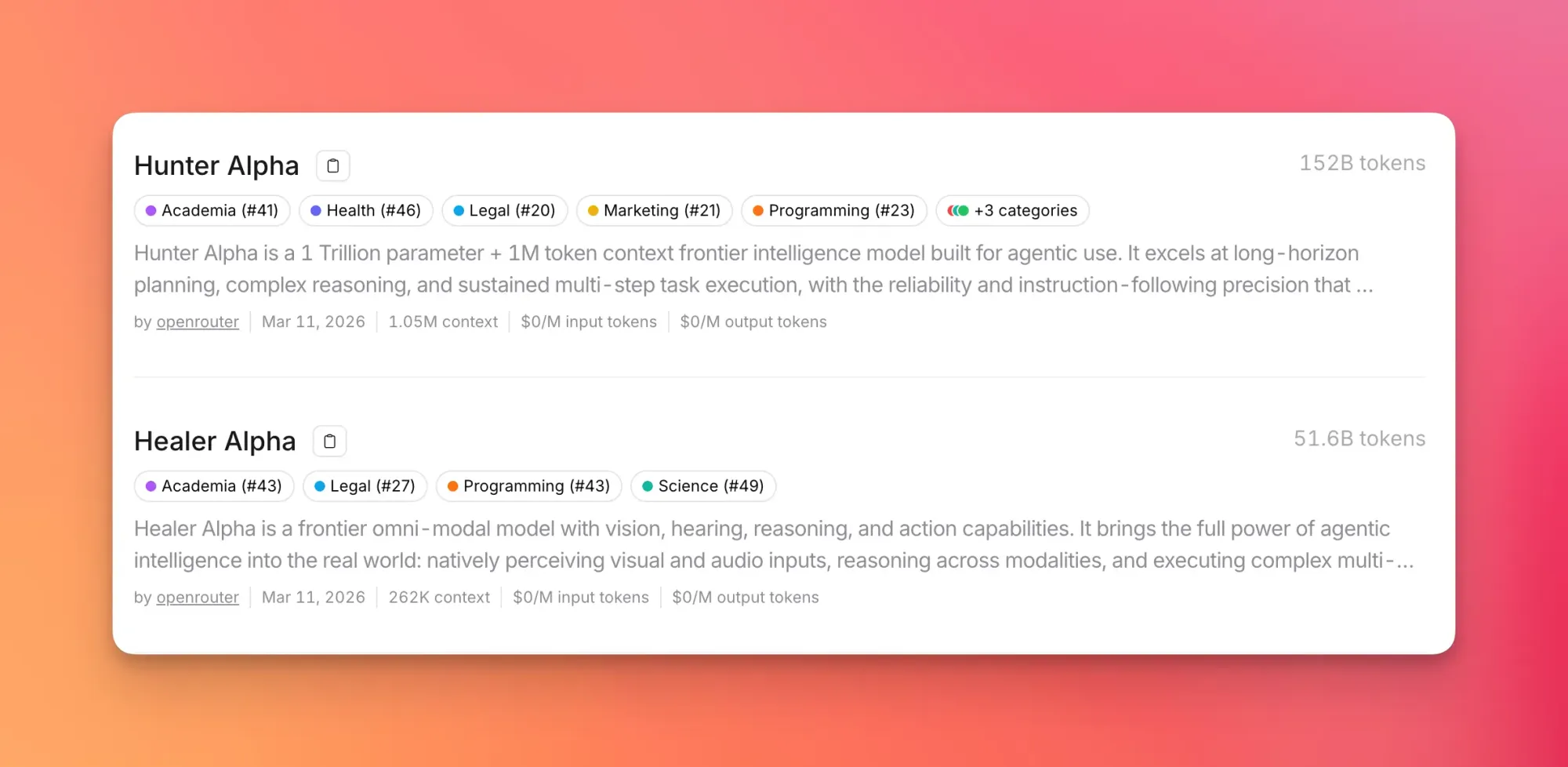

OpenRouter dropped two free stealth models: Hunter Alpha (1T params, 1M context) and Healer Alpha (omnimodal, 262K context). Here's what testing actually revealed.

OpenRouter quietly added two models from an unnamed provider. No lab announcement, no benchmark sheet, no press release: just two new entries in the model list, Hunter Alpha and Healer Alpha. Both are free, both are of unknown origin, and the community immediately started tearing them apart.

OpenRouter (@OpenRouter), March 11, 2026:

Two new Stealth Models are live now!

- Hunter Alpha: 1T-parameter model with 1M context built for agentic workflows, long-horizon tasks, and serious tool use.

- Healer Alpha: multimodal model combining strong image, video, and audio understanding with real agentic execution.

What Each Model Claims to Be

Hunter Alpha is listed as a 1-trillion-parameter model with a 1 million token context window. Its description specifically positions it for agentic workflows, long-horizon tasks, and tool use. It outputs at approximately 48 tokens/second — noticeably slower than most frontier models, which is consistent with its claimed scale.

Healer Alpha appears to be the smaller, faster sibling (its parameter count isn't listed). It's an omnimodal model accepting image, video, and audio inputs, with a 262K context window and output speed around 93 tokens/second. Both models share a knowledge cutoff of May 2025 and, when probed directly, claim to be "created by a small team focused on AGI."
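To put the reported speeds in wall-clock terms, here is a back-of-the-envelope sketch. It assumes a constant decode rate equal to the approximate figures above (48 and 93 tokens/second) and ignores time-to-first-token and any hidden reasoning tokens, so real latency will be higher. The 2,000-token response length is an arbitrary illustrative value, not something measured.

```python
# Rough wall-clock estimate for streaming a response of a given length,
# using the approximate output speeds reported for each model.
# Assumption: constant decode rate; time-to-first-token and hidden
# reasoning tokens are ignored.
REPORTED_TOKENS_PER_SECOND = {
    "Hunter Alpha": 48.0,
    "Healer Alpha": 93.0,
}


def generation_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Seconds needed to emit `output_tokens` at a fixed decode rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return output_tokens / tokens_per_second


if __name__ == "__main__":
    response_length = 2_000  # illustrative answer length in tokens
    for name, tps in REPORTED_TOKENS_PER_SECOND.items():
        secs = generation_seconds(response_length, tps)
        print(f"{name}: {secs:.1f} s for {response_length:,} tokens")
```

At these rates a 2,000-token answer takes roughly 41.7 seconds from Hunter Alpha versus about 21.5 seconds from Healer Alpha, i.e. Hunter Alpha runs at a little over half Healer Alpha's speed.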

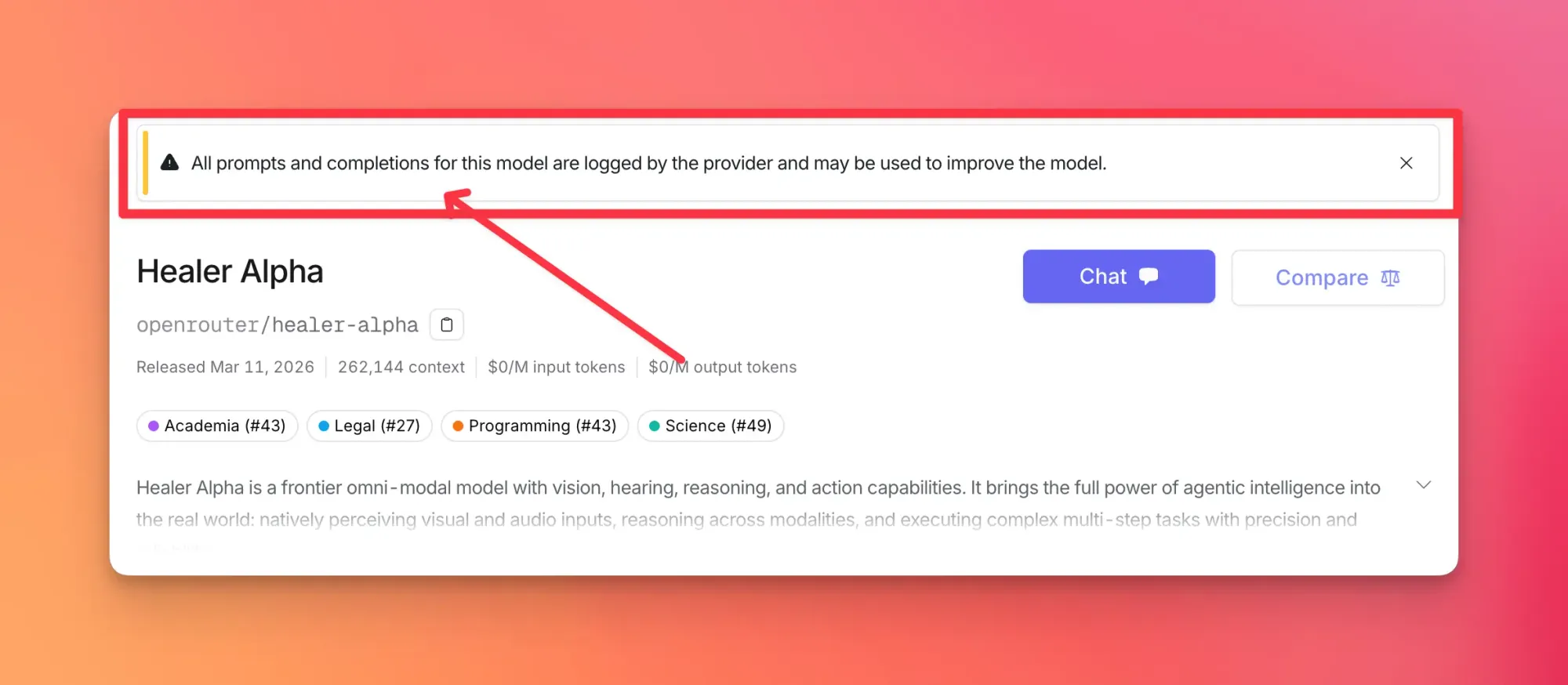

The Free-But-Not-Free Catch

Both models are currently free to use on OpenRouter. The cost is your data: your prompts will be used for training, as flagged by community members shortly after launch. OpenRouter hasn't hidden this, but it's easy to miss if you're just clicking through to test something.

For anyone working with proprietary code, sensitive business logic, or anything you'd rather not feed into an unknown lab's training pipeline — skip these for now. For public prototyping and exploration, the tradeoff is more defensible.

What Testing Actually Found

The initial reaction from the community was not uniformly positive. Basic arithmetic failures were reported within hours. Multiple testers flagged that neither model can reliably decode twice-base64-encoded strings. These aren't edge-case failures — they're the kind of thing that disqualifies a model from most serious pipelines.
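The base64 check is easy to reproduce yourself. The testers' exact prompts aren't published here, so the sketch below is a hypothetical reconstruction: it encodes a random plaintext twice (so the answer can't be memorized), builds a prompt, and grades the model's reply against a reference decoder. The function names and prompt wording are illustrative, and sending the prompt to a model is left out.

```python
import base64
import secrets


def make_double_base64_probe(plaintext: str) -> str:
    """Encode `plaintext` with base64 twice (base64 of the base64 string)."""
    once = base64.b64encode(plaintext.encode("utf-8"))
    twice = base64.b64encode(once)
    return twice.decode("ascii")


def decode_double_base64(encoded: str) -> str:
    """Reference decoder: strip both base64 layers."""
    once = base64.b64decode(encoded)
    return base64.b64decode(once).decode("utf-8")


def build_prompt(encoded: str) -> str:
    """Hypothetical prompt wording; the encoded string goes on the last line."""
    return (
        "The following string is base64-encoded twice. "
        "Decode it and reply with the plaintext only:\n" + encoded
    )


def new_probe() -> tuple[str, str]:
    """Return (prompt, expected_answer) using a fresh random plaintext."""
    plaintext = secrets.token_hex(8)  # random, so it can't be memorized
    return build_prompt(make_double_base64_probe(plaintext)), plaintext


def grade(model_reply: str, expected: str) -> bool:
    """Exact match after trimming whitespace; a half-decoded reply fails."""
    return model_reply.strip() == expected


if __name__ == "__main__":
    prompt, expected = new_probe()
    print(prompt)
    print("expected:", expected)
```

For example, `make_double_base64_probe("hello")` returns `"YUdWc2JHOD0="`; a model that only peels one layer would answer `aGVsbG8=` and fail the grade.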

Lisan al Gaib (@scaling01), March 11, 2026:

Hunter Alpha and Healer Alpha on OpenRouter:

- Hunter Alpha has Claude psychosis

- Healer Alpha says it's built by Xiaomi

- they are definitely chinese models

- Hunter Alpha responds much slower, like half the tks/s

- both models are completely SVG benchmaxxed

- both fail…

The "SVG benchmaxxed" observation is worth unpacking. Both models produce visually impressive SVG output: clean rendering, particle effects, polished UI elements. But community testers believe this aesthetic performance was optimized specifically for visual benchmarks at the expense of underlying reasoning. Polished output masking weak fundamentals is a known failure mode for models tuned against coding benchmarks without deeper evaluation.

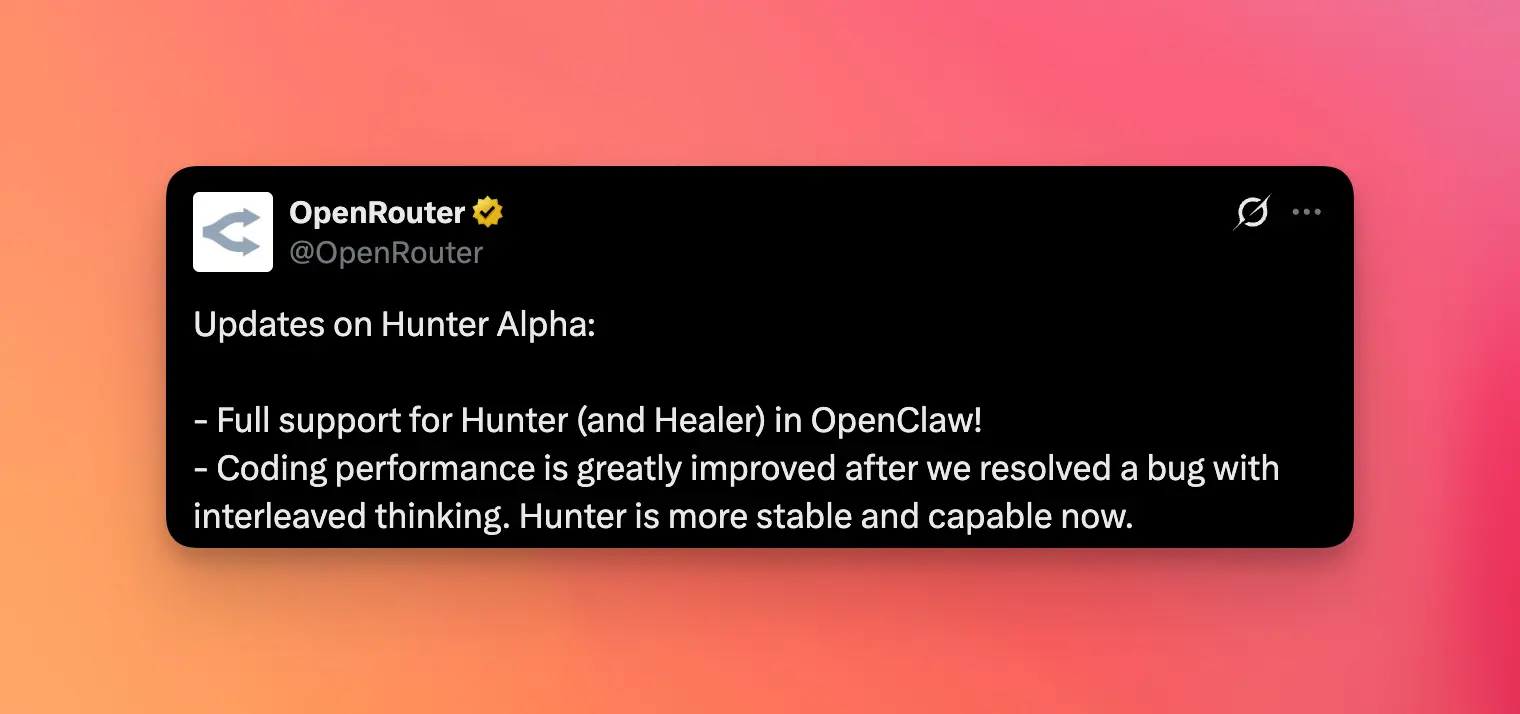

Hunter Alpha also shipped with a bug at launch: OpenRouter confirmed an issue with interleaved thinking that was degrading its coding performance. A fix has since been deployed, and OpenRouter describes the model as "more stable and capable" post-patch.

The Origin Problem

Nobody knows who built these. The anonymous OpenRouter provider serving Hunter and Healer Alpha is the same one that previously served PonyAlpha, which was later confirmed to be Zhipu AI's GLM-5. That connection makes Zhipu AI the leading suspect, with the two models possibly being its next-generation flagship GLM and vision-language releases.

The DeepSeek V4 hypothesis circulated early and loudly — enough to dominate headlines — but has largely been walked back. Tester @cheatyyyy, who initially floated the DeepSeek angle, later updated to say the SVG and frontend design quality doesn't match what they'd expect from DeepSeek or Kimi/Minimax.

cheaty (@cheatyyyy), March 11, 2026:

Deepseek V4 and V4 Lite may currently be in testing as stealth models on OpenRouter.

Hunter Alpha seems to be V4 (1M context, it says 1 Trillion params in the description) and Healer Alpha seems to be another multimodal variant of some sort, but it's currently down

Update: I do…

Then there's Healer Alpha's Xiaomi self-identification. When directly queried about its origins, Healer Alpha reportedly stated it was built by Xiaomi. Self-reports from stealth models are unreliable by design — the model's identity prompt can be set to anything — but it's a data point that's hard to entirely ignore.

The censorship test adds another wrinkle. Tester @mranti uses politically sensitive questions as a proxy for detecting Chinese-origin models, on the reasoning that Chinese models typically refuse or deflect on certain topics. Both Hunter and Healer Alpha reportedly answered these questions without deflecting, which means either they're not Chinese-origin models or they've been specifically tuned to get through exactly this kind of probe.

The actual origin remains unconfirmed. If you're curious about the DeepSeek V4 angle specifically, we've covered what's actually known about DeepSeek V4 separately.

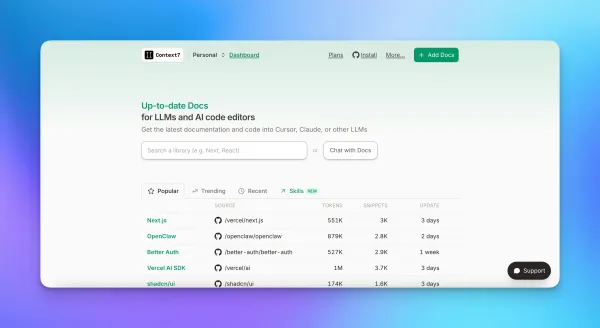

OpenClaw Integration

The practical enthusiasm comes from agentic builders. OpenClaw v2026.3.11 shipped with full support for both Hunter and Healer Alpha, alongside Gemini Embedding 2 memory integration and security hardening. That's free 1M context feeding directly into an open-source agentic platform — no setup friction, no API cost.

CV.YH (@0xCVYH), March 12, 2026:

New OpenClaw release: the platform that runs Major 24/7

What's new:

→ Hunter & Healer Alpha (1M context, free via OpenRouter)

→ GPT 5.4 no longer stalls mid-reasoning

→ Gemini Embedding 2 for memory

→ OpenCode with Go support

→ Security sprint…

For teams already building on OpenClaw or the MCP ecosystem, the integration is genuinely useful for prototyping. The free 1M context window is real, and for long-document tasks or multi-step agent chains, that's worth experimenting with — provided you're not putting anything sensitive through it. If you're building agentic workflows, our guide to AI agent skills covers some relevant patterns.

Who Should Use These Right Now

Good fit: AI engineers prototyping long-context or agentic tasks who want to stress-test 1M context without burning API credits. OpenClaw users who want zero-friction access to a large-context model. Anyone curious enough to run their own fingerprinting tests — there's an open-source tokenizer fingerprinting script the community has been using for exactly this.

Bad fit: Production teams. Anyone handling data they don't want in a training set. Teams that need verified arithmetic, reliable multi-step reasoning, or known model provenance for compliance. The math failures and the interleaved thinking bug — even post-fix — suggest these are models in active development, not stable production-ready releases.

The origin will eventually leak — it always does. Whether what gets confirmed matches the specs and the hype is the question worth waiting on.

You can try out these models for free right now.

Hunter Alpha - https://openrouter.ai/openrouter/hunter-alpha

Healer Alpha - https://openrouter.ai/openrouter/healer-alpha
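If you'd rather call the models from code than from the web UI, OpenRouter exposes an OpenAI-compatible chat completions endpoint. The sketch below uses only Python's standard library. The model IDs `openrouter/hunter-alpha` and `openrouter/healer-alpha` are inferred from the model page URLs above and may change or disappear once the stealth period ends; `OPENROUTER_API_KEY` is an assumed environment variable name. Given the training-data caveat, only send prompts you'd be comfortable making public.

```python
import json
import os
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
# Assumed model slugs, taken from the model page URLs; stealth models may be renamed or retired.
HUNTER_ALPHA = "openrouter/hunter-alpha"
HEALER_ALPHA = "openrouter/healer-alpha"


def build_request(model: str, prompt: str, api_key: str) -> urllib.request.Request:
    """Build a single-turn chat completion request without sending it."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(model: str, prompt: str, api_key: str, timeout: float = 120.0) -> str:
    """Send the request and return the first choice's message text."""
    req = build_request(model, prompt, api_key)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        payload = json.load(resp)
    return payload["choices"][0]["message"]["content"]


if __name__ == "__main__":
    api_key = os.environ.get("OPENROUTER_API_KEY")
    if not api_key:
        print("Set OPENROUTER_API_KEY to run this example.")
    else:
        # Generous timeout: Hunter Alpha's reported ~48 tokens/s makes long answers slow.
        print(ask(HUNTER_ALPHA, "In one sentence, what is a context window?", api_key))
```

Swapping `HUNTER_ALPHA` for `HEALER_ALPHA` is the only change needed to compare the two on the same prompt.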